Merge branch 'develop' into develop

This commit is contained in:

commit

fee2c17bf7

|

|

@ -3,10 +3,8 @@ English | [简体中文](README_cn.md)

|

|||

## Introduction

|

||||

PaddleOCR aims to create rich, leading, and practical OCR tools that help users train better models and apply them into practice.

|

||||

|

||||

**Live stream on coming day**: July 21, 2020 at 8 pm BiliBili station live stream

|

||||

|

||||

**Recent updates**

|

||||

|

||||

- 2020.7.23, Release the playback and PPT of live class on BiliBili station, PaddleOCR Introduction, [address](https://aistudio.baidu.com/aistudio/course/introduce/1519)

|

||||

- 2020.7.15, Add mobile App demo , support both iOS and Android ( based on easyedge and Paddle Lite)

|

||||

- 2020.7.15, Improve the deployment ability, add the C + + inference , serving deployment. In addtion, the benchmarks of the ultra-lightweight OCR model are provided.

|

||||

- 2020.7.15, Add several related datasets, data annotation and synthesis tools.

|

||||

|

|

@ -214,3 +212,4 @@ We welcome all the contributions to PaddleOCR and appreciate for your feedback v

|

|||

- Many thanks to [lyl120117](https://github.com/lyl120117) for contributing the code for printing the network structure.

|

||||

- Thanks [xiangyubo](https://github.com/xiangyubo) for contributing the handwritten Chinese OCR datasets.

|

||||

- Thanks [authorfu](https://github.com/authorfu) for contributing Android demo and [xiadeye](https://github.com/xiadeye) contributing iOS demo, respectively.

|

||||

- Thanks [BeyondYourself](https://github.com/BeyondYourself) for contributing many great suggestions and simplifying part of the code style.

|

||||

|

|

|

|||

|

|

@ -3,9 +3,8 @@

|

|||

## 简介

|

||||

PaddleOCR旨在打造一套丰富、领先、且实用的OCR工具库,助力使用者训练出更好的模型,并应用落地。

|

||||

|

||||

**直播预告:2020年7月21日晚8点B站直播,PaddleOCR开源大礼包全面解读,直播地址当天更新**

|

||||

|

||||

**近期更新**

|

||||

- 2020.7.23 发布7月21日B站直播课回放和PPT,PaddleOCR开源大礼包全面解读,[获取地址](https://aistudio.baidu.com/aistudio/course/introduce/1519)

|

||||

- 2020.7.15 添加基于EasyEdge和Paddle-Lite的移动端DEMO,支持iOS和Android系统

|

||||

- 2020.7.15 完善预测部署,添加基于C++预测引擎推理、服务化部署和端侧部署方案,以及超轻量级中文OCR模型预测耗时Benchmark

|

||||

- 2020.7.15 整理OCR相关数据集、常用数据标注以及合成工具

|

||||

|

|

@ -206,8 +205,9 @@ PaddleOCR文本识别算法的训练和使用请参考文档教程中[模型训

|

|||

## 贡献代码

|

||||

我们非常欢迎你为PaddleOCR贡献代码,也十分感谢你的反馈。

|

||||

|

||||

- 非常感谢 [Khanh Tran](https://github.com/xxxpsyduck) 贡献了英文文档。

|

||||

- 非常感谢 [Khanh Tran](https://github.com/xxxpsyduck) 贡献了英文文档

|

||||

- 非常感谢 [zhangxin](https://github.com/ZhangXinNan)([Blog](https://blog.csdn.net/sdlypyzq)) 贡献新的可视化方式、添加.gitgnore、处理手动设置PYTHONPATH环境变量的问题

|

||||

- 非常感谢 [lyl120117](https://github.com/lyl120117) 贡献打印网络结构的代码

|

||||

- 非常感谢 [xiangyubo](https://github.com/xiangyubo) 贡献手写中文OCR数据集

|

||||

- 非常感谢 [authorfu](https://github.com/authorfu) 贡献Android和[xiadeye](https://github.com/xiadeye) 贡献IOS的demo代码

|

||||

- 非常感谢 [BeyondYourself](https://github.com/BeyondYourself) 给PaddleOCR提了很多非常棒的建议,并简化了PaddleOCR的部分代码风格。

|

||||

|

|

|

|||

|

|

@ -1,6 +1,6 @@

|

|||

# 如何快速测试

|

||||

### 1. 安装最新版本的Android Studio

|

||||

可以从https://developer.android.com/studio下载。本Demo使用是4.0版本Android Studio编写。

|

||||

可以从https://developer.android.com/studio 下载。本Demo使用是4.0版本Android Studio编写。

|

||||

|

||||

### 2. 按照NDK 20 以上版本

|

||||

Demo测试的时候使用的是NDK 20b版本,20版本以上均可以支持编译成功。

|

||||

|

|

|

|||

|

|

@ -3,11 +3,11 @@ import java.security.MessageDigest

|

|||

apply plugin: 'com.android.application'

|

||||

|

||||

android {

|

||||

compileSdkVersion 28

|

||||

compileSdkVersion 29

|

||||

defaultConfig {

|

||||

applicationId "com.baidu.paddle.lite.demo.ocr"

|

||||

minSdkVersion 15

|

||||

targetSdkVersion 28

|

||||

minSdkVersion 23

|

||||

targetSdkVersion 29

|

||||

versionCode 1

|

||||

versionName "1.0"

|

||||

testInstrumentationRunner "android.support.test.runner.AndroidJUnitRunner"

|

||||

|

|

@ -39,9 +39,8 @@ android {

|

|||

|

||||

dependencies {

|

||||

implementation fileTree(include: ['*.jar'], dir: 'libs')

|

||||

implementation 'com.android.support:appcompat-v7:28.0.0'

|

||||

implementation 'com.android.support.constraint:constraint-layout:1.1.3'

|

||||

implementation 'com.android.support:design:28.0.0'

|

||||

implementation 'androidx.appcompat:appcompat:1.1.0'

|

||||

implementation 'androidx.constraintlayout:constraintlayout:1.1.3'

|

||||

testImplementation 'junit:junit:4.12'

|

||||

androidTestImplementation 'com.android.support.test:runner:1.0.2'

|

||||

androidTestImplementation 'com.android.support.test.espresso:espresso-core:3.0.2'

|

||||

|

|

|

|||

|

|

@ -14,10 +14,10 @@

|

|||

android:roundIcon="@mipmap/ic_launcher_round"

|

||||

android:supportsRtl="true"

|

||||

android:theme="@style/AppTheme">

|

||||

<!-- to test MiniActivity, change this to com.baidu.paddle.lite.demo.ocr.MiniActivity -->

|

||||

<activity android:name="com.baidu.paddle.lite.demo.ocr.MainActivity">

|

||||

<intent-filter>

|

||||

<action android:name="android.intent.action.MAIN"/>

|

||||

|

||||

<category android:name="android.intent.category.LAUNCHER"/>

|

||||

</intent-filter>

|

||||

</activity>

|

||||

|

|

@ -25,6 +25,15 @@

|

|||

android:name="com.baidu.paddle.lite.demo.ocr.SettingsActivity"

|

||||

android:label="Settings">

|

||||

</activity>

|

||||

<provider

|

||||

android:name="androidx.core.content.FileProvider"

|

||||

android:authorities="com.baidu.paddle.lite.demo.ocr.fileprovider"

|

||||

android:exported="false"

|

||||

android:grantUriPermissions="true">

|

||||

<meta-data

|

||||

android:name="android.support.FILE_PROVIDER_PATHS"

|

||||

android:resource="@xml/file_paths"></meta-data>

|

||||

</provider>

|

||||

</application>

|

||||

|

||||

</manifest>

|

||||

|

|

@ -30,7 +30,7 @@ Java_com_baidu_paddle_lite_demo_ocr_OCRPredictorNative_init(JNIEnv *env, jobject

|

|||

}

|

||||

|

||||

/**

|

||||

* "LITE_POWER_HIGH" 转为 paddle::lite_api::LITE_POWER_HIGH

|

||||

* "LITE_POWER_HIGH" convert to paddle::lite_api::LITE_POWER_HIGH

|

||||

* @param cpu_mode

|

||||

* @return

|

||||

*/

|

||||

|

|

|

|||

|

|

@ -37,7 +37,7 @@ int OCR_PPredictor::init_from_file(const std::string &det_model_path, const std:

|

|||

return RETURN_OK;

|

||||

}

|

||||

/**

|

||||

* 调试用,保存第一步的框选结果

|

||||

* for debug use, show result of First Step

|

||||

* @param filter_boxes

|

||||

* @param boxes

|

||||

* @param srcimg

|

||||

|

|

|

|||

|

|

@ -12,26 +12,26 @@

|

|||

namespace ppredictor {

|

||||

|

||||

/**

|

||||

* 配置

|

||||

* Config

|

||||

*/

|

||||

struct OCR_Config {

|

||||

int thread_num = 4; // 线程数

|

||||

int thread_num = 4; // Thread num

|

||||

paddle::lite_api::PowerMode mode = paddle::lite_api::LITE_POWER_HIGH; // PaddleLite Mode

|

||||

};

|

||||

|

||||

/**

|

||||

* 一个四边形内图片的推理结果,

|

||||

* PolyGone Result

|

||||

*/

|

||||

struct OCRPredictResult {

|

||||

std::vector<int> word_index; //

|

||||

std::vector<int> word_index;

|

||||

std::vector<std::vector<int>> points;

|

||||

float score;

|

||||

};

|

||||

|

||||

/**

|

||||

* OCR 一共有2个模型进行推理,

|

||||

* 1. 使用第一个模型(det),框选出多个四边形

|

||||

* 2. 从原图从抠出这些多边形,使用第二个模型(rec),获取文本

|

||||

* OCR there are 2 models

|

||||

* 1. First model(det),select polygones to show where are the texts

|

||||

* 2. crop from the origin images, use these polygones to infer

|

||||

*/

|

||||

class OCR_PPredictor : public PPredictor_Interface {

|

||||

public:

|

||||

|

|

@ -50,7 +50,7 @@ public:

|

|||

int init(const std::string &det_model_content, const std::string &rec_model_content);

|

||||

int init_from_file(const std::string &det_model_path, const std::string &rec_model_path);

|

||||

/**

|

||||

* 返回OCR结果

|

||||

* Return OCR result

|

||||

* @param dims

|

||||

* @param input_data

|

||||

* @param input_len

|

||||

|

|

@ -69,7 +69,7 @@ public:

|

|||

private:

|

||||

|

||||

/**

|

||||

* 从第一个模型的结果中计算有文字的四边形

|

||||

* calcul Polygone from the result image of first model

|

||||

* @param pred

|

||||

* @param output_height

|

||||

* @param output_width

|

||||

|

|

@ -81,7 +81,7 @@ private:

|

|||

const cv::Mat &origin);

|

||||

|

||||

/**

|

||||

* 第二个模型的推理

|

||||

* infer for second model

|

||||

*

|

||||

* @param boxes

|

||||

* @param origin

|

||||

|

|

@ -91,14 +91,14 @@ private:

|

|||

infer_rec(const std::vector<std::vector<std::vector<int>>> &boxes, const cv::Mat &origin);

|

||||

|

||||

/**

|

||||

* 第二个模型提取文字的后处理

|

||||

* Postprocess or sencod model to extract text

|

||||

* @param res

|

||||

* @return

|

||||

*/

|

||||

std::vector<int> postprocess_rec_word_index(const PredictorOutput &res);

|

||||

|

||||

/**

|

||||

* 计算第二个模型的文字的置信度

|

||||

* calculate confidence of second model text result

|

||||

* @param res

|

||||

* @return

|

||||

*/

|

||||

|

|

|

|||

|

|

@ -7,7 +7,7 @@

|

|||

namespace ppredictor {

|

||||

|

||||

/**

|

||||

* PaddleLite Preditor 通用接口

|

||||

* PaddleLite Preditor Common Interface

|

||||

*/

|

||||

class PPredictor_Interface {

|

||||

public:

|

||||

|

|

@ -21,7 +21,7 @@ public:

|

|||

};

|

||||

|

||||

/**

|

||||

* 通用推理

|

||||

* Common Predictor

|

||||

*/

|

||||

class PPredictor : public PPredictor_Interface {

|

||||

public:

|

||||

|

|

@ -33,9 +33,9 @@ public:

|

|||

}

|

||||

|

||||

/**

|

||||

* 初始化paddlitelite的opt模型,nb格式,与init_paddle二选一

|

||||

* init paddlitelite opt model,nb format ,or use ini_paddle

|

||||

* @param model_content

|

||||

* @return 0 目前是固定值0, 之后其他值表示失败

|

||||

* @return 0

|

||||

*/

|

||||

virtual int init_nb(const std::string &model_content);

|

||||

|

||||

|

|

|

|||

|

|

@ -21,10 +21,10 @@ public:

|

|||

const std::vector<std::vector<uint64_t>> get_lod() const;

|

||||

const std::vector<int64_t> get_shape() const;

|

||||

|

||||

std::vector<float> data; // 通常是float返回,与下面的data_int二选一

|

||||

std::vector<int> data_int; // 少数层是int返回,与 data二选一

|

||||

std::vector<int64_t> shape; // PaddleLite输出层的shape

|

||||

std::vector<std::vector<uint64_t>> lod; // PaddleLite输出层的lod

|

||||

std::vector<float> data; // return float, or use data_int

|

||||

std::vector<int> data_int; // several layers return int ,or use data

|

||||

std::vector<int64_t> shape; // PaddleLite output shape

|

||||

std::vector<std::vector<uint64_t>> lod; // PaddleLite output lod

|

||||

|

||||

private:

|

||||

std::unique_ptr<const paddle::lite_api::Tensor> _tensor;

|

||||

|

|

|

|||

|

|

@ -19,15 +19,16 @@ package com.baidu.paddle.lite.demo.ocr;

|

|||

import android.content.res.Configuration;

|

||||

import android.os.Bundle;

|

||||

import android.preference.PreferenceActivity;

|

||||

import android.support.annotation.LayoutRes;

|

||||

import android.support.annotation.Nullable;

|

||||

import android.support.v7.app.ActionBar;

|

||||

import android.support.v7.app.AppCompatDelegate;

|

||||

import android.support.v7.widget.Toolbar;

|

||||

import android.view.MenuInflater;

|

||||

import android.view.View;

|

||||

import android.view.ViewGroup;

|

||||

|

||||

import androidx.annotation.LayoutRes;

|

||||

import androidx.annotation.Nullable;

|

||||

import androidx.appcompat.app.ActionBar;

|

||||

import androidx.appcompat.app.AppCompatDelegate;

|

||||

import androidx.appcompat.widget.Toolbar;

|

||||

|

||||

/**

|

||||

* A {@link PreferenceActivity} which implements and proxies the necessary calls

|

||||

* to be used with AppCompat.

|

||||

|

|

|

|||

|

|

@ -3,23 +3,22 @@ package com.baidu.paddle.lite.demo.ocr;

|

|||

import android.Manifest;

|

||||

import android.app.ProgressDialog;

|

||||

import android.content.ContentResolver;

|

||||

import android.content.Context;

|

||||

import android.content.Intent;

|

||||

import android.content.SharedPreferences;

|

||||

import android.content.pm.PackageManager;

|

||||

import android.database.Cursor;

|

||||

import android.graphics.Bitmap;

|

||||

import android.graphics.BitmapFactory;

|

||||

import android.media.ExifInterface;

|

||||

import android.net.Uri;

|

||||

import android.os.Bundle;

|

||||

import android.os.Environment;

|

||||

import android.os.Handler;

|

||||

import android.os.HandlerThread;

|

||||

import android.os.Message;

|

||||

import android.preference.PreferenceManager;

|

||||

import android.provider.MediaStore;

|

||||

import android.support.annotation.NonNull;

|

||||

import android.support.v4.app.ActivityCompat;

|

||||

import android.support.v4.content.ContextCompat;

|

||||

import android.support.v7.app.AppCompatActivity;

|

||||

import android.text.method.ScrollingMovementMethod;

|

||||

import android.util.Log;

|

||||

import android.view.Menu;

|

||||

|

|

@ -29,9 +28,17 @@ import android.widget.ImageView;

|

|||

import android.widget.TextView;

|

||||

import android.widget.Toast;

|

||||

|

||||

import androidx.annotation.NonNull;

|

||||

import androidx.appcompat.app.AppCompatActivity;

|

||||

import androidx.core.app.ActivityCompat;

|

||||

import androidx.core.content.ContextCompat;

|

||||

import androidx.core.content.FileProvider;

|

||||

|

||||

import java.io.File;

|

||||

import java.io.IOException;

|

||||

import java.io.InputStream;

|

||||

import java.text.SimpleDateFormat;

|

||||

import java.util.Date;

|

||||

|

||||

public class MainActivity extends AppCompatActivity {

|

||||

private static final String TAG = MainActivity.class.getSimpleName();

|

||||

|

|

@ -69,6 +76,7 @@ public class MainActivity extends AppCompatActivity {

|

|||

protected float[] inputMean = new float[]{};

|

||||

protected float[] inputStd = new float[]{};

|

||||

protected float scoreThreshold = 0.1f;

|

||||

private String currentPhotoPath;

|

||||

|

||||

protected Predictor predictor = new Predictor();

|

||||

|

||||

|

|

@ -368,18 +376,56 @@ public class MainActivity extends AppCompatActivity {

|

|||

}

|

||||

|

||||

private void takePhoto() {

|

||||

Intent takePhotoIntent = new Intent(MediaStore.ACTION_IMAGE_CAPTURE);

|

||||

if (takePhotoIntent.resolveActivity(getPackageManager()) != null) {

|

||||

startActivityForResult(takePhotoIntent, TAKE_PHOTO_REQUEST_CODE);

|

||||

Intent takePictureIntent = new Intent(MediaStore.ACTION_IMAGE_CAPTURE);

|

||||

// Ensure that there's a camera activity to handle the intent

|

||||

if (takePictureIntent.resolveActivity(getPackageManager()) != null) {

|

||||

// Create the File where the photo should go

|

||||

File photoFile = null;

|

||||

try {

|

||||

photoFile = createImageFile();

|

||||

} catch (IOException ex) {

|

||||

Log.e("MainActitity", ex.getMessage(), ex);

|

||||

Toast.makeText(MainActivity.this,

|

||||

"Create Camera temp file failed: " + ex.getMessage(), Toast.LENGTH_SHORT).show();

|

||||

}

|

||||

// Continue only if the File was successfully created

|

||||

if (photoFile != null) {

|

||||

Log.i(TAG, "FILEPATH " + getExternalFilesDir("Pictures").getAbsolutePath());

|

||||

Uri photoURI = FileProvider.getUriForFile(this,

|

||||

"com.baidu.paddle.lite.demo.ocr.fileprovider",

|

||||

photoFile);

|

||||

currentPhotoPath = photoFile.getAbsolutePath();

|

||||

takePictureIntent.putExtra(MediaStore.EXTRA_OUTPUT, photoURI);

|

||||

startActivityForResult(takePictureIntent, TAKE_PHOTO_REQUEST_CODE);

|

||||

Log.i(TAG, "startActivityForResult finished");

|

||||

}

|

||||

}

|

||||

|

||||

}

|

||||

|

||||

private File createImageFile() throws IOException {

|

||||

// Create an image file name

|

||||

String timeStamp = new SimpleDateFormat("yyyyMMdd_HHmmss").format(new Date());

|

||||

String imageFileName = "JPEG_" + timeStamp + "_";

|

||||

File storageDir = getExternalFilesDir(Environment.DIRECTORY_PICTURES);

|

||||

File image = File.createTempFile(

|

||||

imageFileName, /* prefix */

|

||||

".bmp", /* suffix */

|

||||

storageDir /* directory */

|

||||

);

|

||||

|

||||

return image;

|

||||

}

|

||||

|

||||

@Override

|

||||

protected void onActivityResult(int requestCode, int resultCode, Intent data) {

|

||||

super.onActivityResult(requestCode, resultCode, data);

|

||||

if (resultCode == RESULT_OK && data != null) {

|

||||

if (resultCode == RESULT_OK) {

|

||||

switch (requestCode) {

|

||||

case OPEN_GALLERY_REQUEST_CODE:

|

||||

if (data == null) {

|

||||

break;

|

||||

}

|

||||

try {

|

||||

ContentResolver resolver = getContentResolver();

|

||||

Uri uri = data.getData();

|

||||

|

|

@ -393,9 +439,22 @@ public class MainActivity extends AppCompatActivity {

|

|||

}

|

||||

break;

|

||||

case TAKE_PHOTO_REQUEST_CODE:

|

||||

Bundle extras = data.getExtras();

|

||||

Bitmap image = (Bitmap) extras.get("data");

|

||||

if (currentPhotoPath != null) {

|

||||

ExifInterface exif = null;

|

||||

try {

|

||||

exif = new ExifInterface(currentPhotoPath);

|

||||

} catch (IOException e) {

|

||||

e.printStackTrace();

|

||||

}

|

||||

int orientation = exif.getAttributeInt(ExifInterface.TAG_ORIENTATION,

|

||||

ExifInterface.ORIENTATION_UNDEFINED);

|

||||

Log.i(TAG, "rotation " + orientation);

|

||||

Bitmap image = BitmapFactory.decodeFile(currentPhotoPath);

|

||||

image = Utils.rotateBitmap(image, orientation);

|

||||

onImageChanged(image);

|

||||

} else {

|

||||

Log.e(TAG, "currentPhotoPath is null");

|

||||

}

|

||||

break;

|

||||

default:

|

||||

break;

|

||||

|

|

|

|||

|

|

@ -0,0 +1,157 @@

|

|||

package com.baidu.paddle.lite.demo.ocr;

|

||||

|

||||

import android.graphics.Bitmap;

|

||||

import android.graphics.BitmapFactory;

|

||||

import android.os.Build;

|

||||

import android.os.Bundle;

|

||||

import android.os.Handler;

|

||||

import android.os.HandlerThread;

|

||||

import android.os.Message;

|

||||

import android.util.Log;

|

||||

import android.view.View;

|

||||

import android.widget.Button;

|

||||

import android.widget.ImageView;

|

||||

import android.widget.TextView;

|

||||

import android.widget.Toast;

|

||||

|

||||

import androidx.appcompat.app.AppCompatActivity;

|

||||

|

||||

import java.io.IOException;

|

||||

import java.io.InputStream;

|

||||

|

||||

public class MiniActivity extends AppCompatActivity {

|

||||

|

||||

|

||||

public static final int REQUEST_LOAD_MODEL = 0;

|

||||

public static final int REQUEST_RUN_MODEL = 1;

|

||||

public static final int REQUEST_UNLOAD_MODEL = 2;

|

||||

public static final int RESPONSE_LOAD_MODEL_SUCCESSED = 0;

|

||||

public static final int RESPONSE_LOAD_MODEL_FAILED = 1;

|

||||

public static final int RESPONSE_RUN_MODEL_SUCCESSED = 2;

|

||||

public static final int RESPONSE_RUN_MODEL_FAILED = 3;

|

||||

|

||||

private static final String TAG = "MiniActivity";

|

||||

|

||||

protected Handler receiver = null; // Receive messages from worker thread

|

||||

protected Handler sender = null; // Send command to worker thread

|

||||

protected HandlerThread worker = null; // Worker thread to load&run model

|

||||

protected volatile Predictor predictor = null;

|

||||

|

||||

private String assetModelDirPath = "models/ocr_v1_for_cpu";

|

||||

private String assetlabelFilePath = "labels/ppocr_keys_v1.txt";

|

||||

|

||||

private Button button;

|

||||

private ImageView imageView; // image result

|

||||

private TextView textView; // text result

|

||||

|

||||

@Override

|

||||

protected void onCreate(Bundle savedInstanceState) {

|

||||

super.onCreate(savedInstanceState);

|

||||

setContentView(R.layout.activity_mini);

|

||||

|

||||

Log.i(TAG, "SHOW in Logcat");

|

||||

|

||||

// Prepare the worker thread for mode loading and inference

|

||||

worker = new HandlerThread("Predictor Worker");

|

||||

worker.start();

|

||||

sender = new Handler(worker.getLooper()) {

|

||||

public void handleMessage(Message msg) {

|

||||

switch (msg.what) {

|

||||

case REQUEST_LOAD_MODEL:

|

||||

// Load model and reload test image

|

||||

if (!onLoadModel()) {

|

||||

runOnUiThread(new Runnable() {

|

||||

@Override

|

||||

public void run() {

|

||||

Toast.makeText(MiniActivity.this, "Load model failed!", Toast.LENGTH_SHORT).show();

|

||||

}

|

||||

});

|

||||

}

|

||||

break;

|

||||

case REQUEST_RUN_MODEL:

|

||||

// Run model if model is loaded

|

||||

final boolean isSuccessed = onRunModel();

|

||||

runOnUiThread(new Runnable() {

|

||||

@Override

|

||||

public void run() {

|

||||

if (isSuccessed){

|

||||

onRunModelSuccessed();

|

||||

}else{

|

||||

Toast.makeText(MiniActivity.this, "Run model failed!", Toast.LENGTH_SHORT).show();

|

||||

}

|

||||

}

|

||||

});

|

||||

break;

|

||||

}

|

||||

}

|

||||

};

|

||||

sender.sendEmptyMessage(REQUEST_LOAD_MODEL); // corresponding to REQUEST_LOAD_MODEL, to call onLoadModel()

|

||||

|

||||

imageView = findViewById(R.id.imageView);

|

||||

textView = findViewById(R.id.sample_text);

|

||||

button = findViewById(R.id.button);

|

||||

button.setOnClickListener(new View.OnClickListener() {

|

||||

@Override

|

||||

public void onClick(View v) {

|

||||

sender.sendEmptyMessage(REQUEST_RUN_MODEL);

|

||||

}

|

||||

});

|

||||

|

||||

|

||||

}

|

||||

|

||||

@Override

|

||||

protected void onDestroy() {

|

||||

onUnloadModel();

|

||||

if (Build.VERSION.SDK_INT >= Build.VERSION_CODES.JELLY_BEAN_MR2) {

|

||||

worker.quitSafely();

|

||||

} else {

|

||||

worker.quit();

|

||||

}

|

||||

super.onDestroy();

|

||||

}

|

||||

|

||||

/**

|

||||

* call in onCreate, model init

|

||||

*

|

||||

* @return

|

||||

*/

|

||||

private boolean onLoadModel() {

|

||||

if (predictor == null) {

|

||||

predictor = new Predictor();

|

||||

}

|

||||

return predictor.init(this, assetModelDirPath, assetlabelFilePath);

|

||||

}

|

||||

|

||||

/**

|

||||

* init engine

|

||||

* call in onCreate

|

||||

*

|

||||

* @return

|

||||

*/

|

||||

private boolean onRunModel() {

|

||||

try {

|

||||

String assetImagePath = "images/5.jpg";

|

||||

InputStream imageStream = getAssets().open(assetImagePath);

|

||||

Bitmap image = BitmapFactory.decodeStream(imageStream);

|

||||

// Input is Bitmap

|

||||

predictor.setInputImage(image);

|

||||

return predictor.isLoaded() && predictor.runModel();

|

||||

} catch (IOException e) {

|

||||

e.printStackTrace();

|

||||

return false;

|

||||

}

|

||||

}

|

||||

|

||||

private void onRunModelSuccessed() {

|

||||

Log.i(TAG, "onRunModelSuccessed");

|

||||

textView.setText(predictor.outputResult);

|

||||

imageView.setImageBitmap(predictor.outputImage);

|

||||

}

|

||||

|

||||

private void onUnloadModel() {

|

||||

if (predictor != null) {

|

||||

predictor.releaseModel();

|

||||

}

|

||||

}

|

||||

}

|

||||

|

|

@ -35,8 +35,8 @@ public class OCRPredictorNative {

|

|||

|

||||

}

|

||||

|

||||

public void release(){

|

||||

if (nativePointer != 0){

|

||||

public void release() {

|

||||

if (nativePointer != 0) {

|

||||

nativePointer = 0;

|

||||

destory(nativePointer);

|

||||

}

|

||||

|

|

|

|||

|

|

@ -38,7 +38,7 @@ public class Predictor {

|

|||

protected float scoreThreshold = 0.1f;

|

||||

protected Bitmap inputImage = null;

|

||||

protected Bitmap outputImage = null;

|

||||

protected String outputResult = "";

|

||||

protected volatile String outputResult = "";

|

||||

protected float preprocessTime = 0;

|

||||

protected float postprocessTime = 0;

|

||||

|

||||

|

|

@ -46,6 +46,16 @@ public class Predictor {

|

|||

public Predictor() {

|

||||

}

|

||||

|

||||

public boolean init(Context appCtx, String modelPath, String labelPath) {

|

||||

isLoaded = loadModel(appCtx, modelPath, cpuThreadNum, cpuPowerMode);

|

||||

if (!isLoaded) {

|

||||

return false;

|

||||

}

|

||||

isLoaded = loadLabel(appCtx, labelPath);

|

||||

return isLoaded;

|

||||

}

|

||||

|

||||

|

||||

public boolean init(Context appCtx, String modelPath, String labelPath, int cpuThreadNum, String cpuPowerMode,

|

||||

String inputColorFormat,

|

||||

long[] inputShape, float[] inputMean,

|

||||

|

|

@ -76,11 +86,7 @@ public class Predictor {

|

|||

Log.e(TAG, "Only BGR color format is supported.");

|

||||

return false;

|

||||

}

|

||||

isLoaded = loadModel(appCtx, modelPath, cpuThreadNum, cpuPowerMode);

|

||||

if (!isLoaded) {

|

||||

return false;

|

||||

}

|

||||

isLoaded = loadLabel(appCtx, labelPath);

|

||||

boolean isLoaded = init(appCtx, modelPath, labelPath);

|

||||

if (!isLoaded) {

|

||||

return false;

|

||||

}

|

||||

|

|

@ -127,12 +133,12 @@ public class Predictor {

|

|||

}

|

||||

|

||||

public void releaseModel() {

|

||||

if (paddlePredictor != null){

|

||||

if (paddlePredictor != null) {

|

||||

paddlePredictor.release();

|

||||

paddlePredictor = null;

|

||||

}

|

||||

isLoaded = false;

|

||||

cpuThreadNum = 4;

|

||||

cpuThreadNum = 1;

|

||||

cpuPowerMode = "LITE_POWER_HIGH";

|

||||

modelPath = "";

|

||||

modelName = "";

|

||||

|

|

@ -222,7 +228,7 @@ public class Predictor {

|

|||

for (int i = 0; i < warmupIterNum; i++) {

|

||||

paddlePredictor.runImage(inputData, width, height, channels, inputImage);

|

||||

}

|

||||

warmupIterNum = 0; // 之后不要再warm了

|

||||

warmupIterNum = 0; // do not need warm

|

||||

// Run inference

|

||||

start = new Date();

|

||||

ArrayList<OcrResultModel> results = paddlePredictor.runImage(inputData, width, height, channels, inputImage);

|

||||

|

|

@ -287,9 +293,7 @@ public class Predictor {

|

|||

if (image == null) {

|

||||

return;

|

||||

}

|

||||

// Scale image to the size of input tensor

|

||||

Bitmap rgbaImage = image.copy(Bitmap.Config.ARGB_8888, true);

|

||||

this.inputImage = rgbaImage;

|

||||

this.inputImage = image.copy(Bitmap.Config.ARGB_8888, true);

|

||||

}

|

||||

|

||||

private ArrayList<OcrResultModel> postprocess(ArrayList<OcrResultModel> results) {

|

||||

|

|

@ -310,7 +314,7 @@ public class Predictor {

|

|||

|

||||

private void drawResults(ArrayList<OcrResultModel> results) {

|

||||

StringBuffer outputResultSb = new StringBuffer("");

|

||||

for (int i=0;i<results.size();i++) {

|

||||

for (int i = 0; i < results.size(); i++) {

|

||||

OcrResultModel result = results.get(i);

|

||||

StringBuilder sb = new StringBuilder("");

|

||||

sb.append(result.getLabel());

|

||||

|

|

@ -319,8 +323,8 @@ public class Predictor {

|

|||

for (Point p : result.getPoints()) {

|

||||

sb.append("(").append(p.x).append(",").append(p.y).append(") ");

|

||||

}

|

||||

Log.i(TAG, sb.toString());

|

||||

outputResultSb.append(i+1).append(": ").append(result.getLabel()).append("\n");

|

||||

Log.i(TAG, sb.toString()); // show LOG in Logcat panel

|

||||

outputResultSb.append(i + 1).append(": ").append(result.getLabel()).append("\n");

|

||||

}

|

||||

outputResult = outputResultSb.toString();

|

||||

outputImage = inputImage;

|

||||

|

|

|

|||

|

|

@ -5,7 +5,8 @@ import android.os.Bundle;

|

|||

import android.preference.CheckBoxPreference;

|

||||

import android.preference.EditTextPreference;

|

||||

import android.preference.ListPreference;

|

||||

import android.support.v7.app.ActionBar;

|

||||

|

||||

import androidx.appcompat.app.ActionBar;

|

||||

|

||||

import java.util.ArrayList;

|

||||

import java.util.List;

|

||||

|

|

|

|||

|

|

@ -2,6 +2,8 @@ package com.baidu.paddle.lite.demo.ocr;

|

|||

|

||||

import android.content.Context;

|

||||

import android.graphics.Bitmap;

|

||||

import android.graphics.Matrix;

|

||||

import android.media.ExifInterface;

|

||||

import android.os.Environment;

|

||||

|

||||

import java.io.*;

|

||||

|

|

@ -110,4 +112,48 @@ public class Utils {

|

|||

}

|

||||

return Bitmap.createScaledBitmap(bitmap, newWidth, newHeight, true);

|

||||

}

|

||||

|

||||

public static Bitmap rotateBitmap(Bitmap bitmap, int orientation) {

|

||||

|

||||

Matrix matrix = new Matrix();

|

||||

switch (orientation) {

|

||||

case ExifInterface.ORIENTATION_NORMAL:

|

||||

return bitmap;

|

||||

case ExifInterface.ORIENTATION_FLIP_HORIZONTAL:

|

||||

matrix.setScale(-1, 1);

|

||||

break;

|

||||

case ExifInterface.ORIENTATION_ROTATE_180:

|

||||

matrix.setRotate(180);

|

||||

break;

|

||||

case ExifInterface.ORIENTATION_FLIP_VERTICAL:

|

||||

matrix.setRotate(180);

|

||||

matrix.postScale(-1, 1);

|

||||

break;

|

||||

case ExifInterface.ORIENTATION_TRANSPOSE:

|

||||

matrix.setRotate(90);

|

||||

matrix.postScale(-1, 1);

|

||||

break;

|

||||

case ExifInterface.ORIENTATION_ROTATE_90:

|

||||

matrix.setRotate(90);

|

||||

break;

|

||||

case ExifInterface.ORIENTATION_TRANSVERSE:

|

||||

matrix.setRotate(-90);

|

||||

matrix.postScale(-1, 1);

|

||||

break;

|

||||

case ExifInterface.ORIENTATION_ROTATE_270:

|

||||

matrix.setRotate(-90);

|

||||

break;

|

||||

default:

|

||||

return bitmap;

|

||||

}

|

||||

try {

|

||||

Bitmap bmRotated = Bitmap.createBitmap(bitmap, 0, 0, bitmap.getWidth(), bitmap.getHeight(), matrix, true);

|

||||

bitmap.recycle();

|

||||

return bmRotated;

|

||||

}

|

||||

catch (OutOfMemoryError e) {

|

||||

e.printStackTrace();

|

||||

return null;

|

||||

}

|

||||

}

|

||||

}

|

||||

|

|

|

|||

|

|

@ -1,5 +1,5 @@

|

|||

<?xml version="1.0" encoding="utf-8"?>

|

||||

<android.support.constraint.ConstraintLayout xmlns:android="http://schemas.android.com/apk/res/android"

|

||||

<androidx.constraintlayout.widget.ConstraintLayout xmlns:android="http://schemas.android.com/apk/res/android"

|

||||

xmlns:app="http://schemas.android.com/apk/res-auto"

|

||||

xmlns:tools="http://schemas.android.com/tools"

|

||||

android:layout_width="match_parent"

|

||||

|

|

@ -96,4 +96,4 @@

|

|||

|

||||

</RelativeLayout>

|

||||

|

||||

</android.support.constraint.ConstraintLayout>

|

||||

</androidx.constraintlayout.widget.ConstraintLayout>

|

||||

|

|

@ -0,0 +1,46 @@

|

|||

<?xml version="1.0" encoding="utf-8"?>

|

||||

<!-- for MiniActivity Use Only -->

|

||||

<androidx.constraintlayout.widget.ConstraintLayout xmlns:android="http://schemas.android.com/apk/res/android"

|

||||

xmlns:app="http://schemas.android.com/apk/res-auto"

|

||||

xmlns:tools="http://schemas.android.com/tools"

|

||||

android:layout_width="match_parent"

|

||||

android:layout_height="match_parent"

|

||||

app:layout_constraintLeft_toLeftOf="parent"

|

||||

app:layout_constraintLeft_toRightOf="parent"

|

||||

tools:context=".MainActivity">

|

||||

|

||||

<TextView

|

||||

android:id="@+id/sample_text"

|

||||

android:layout_width="0dp"

|

||||

android:layout_height="wrap_content"

|

||||

android:text="Hello World!"

|

||||

app:layout_constraintLeft_toLeftOf="parent"

|

||||

app:layout_constraintRight_toRightOf="parent"

|

||||

app:layout_constraintTop_toBottomOf="@id/imageView"

|

||||

android:scrollbars="vertical"

|

||||

/>

|

||||

|

||||

<ImageView

|

||||

android:id="@+id/imageView"

|

||||

android:layout_width="wrap_content"

|

||||

android:layout_height="wrap_content"

|

||||

android:paddingTop="20dp"

|

||||

android:paddingBottom="20dp"

|

||||

app:layout_constraintBottom_toTopOf="@id/imageView"

|

||||

app:layout_constraintLeft_toLeftOf="parent"

|

||||

app:layout_constraintRight_toRightOf="parent"

|

||||

app:layout_constraintTop_toTopOf="parent"

|

||||

tools:srcCompat="@tools:sample/avatars" />

|

||||

|

||||

<Button

|

||||

android:id="@+id/button"

|

||||

android:layout_width="wrap_content"

|

||||

android:layout_height="wrap_content"

|

||||

android:layout_marginBottom="4dp"

|

||||

android:text="Button"

|

||||

app:layout_constraintBottom_toBottomOf="parent"

|

||||

app:layout_constraintLeft_toLeftOf="parent"

|

||||

app:layout_constraintRight_toRightOf="parent"

|

||||

tools:layout_editor_absoluteX="161dp" />

|

||||

|

||||

</androidx.constraintlayout.widget.ConstraintLayout>

|

||||

|

|

@ -0,0 +1,4 @@

|

|||

<?xml version="1.0" encoding="utf-8"?>

|

||||

<paths xmlns:android="http://schemas.android.com/apk/res/android">

|

||||

<external-files-path name="my_images" path="Pictures" />

|

||||

</paths>

|

||||

|

|

@ -1,8 +1,17 @@

|

|||

project(ocr_system CXX C)

|

||||

|

||||

option(WITH_MKL "Compile demo with MKL/OpenBlas support, default use MKL." ON)

|

||||

option(WITH_GPU "Compile demo with GPU/CPU, default use CPU." OFF)

|

||||

option(WITH_STATIC_LIB "Compile demo with static/shared library, default use static." ON)

|

||||

option(USE_TENSORRT "Compile demo with TensorRT." OFF)

|

||||

option(WITH_TENSORRT "Compile demo with TensorRT." OFF)

|

||||

|

||||

SET(PADDLE_LIB "" CACHE PATH "Location of libraries")

|

||||

SET(OPENCV_DIR "" CACHE PATH "Location of libraries")

|

||||

SET(CUDA_LIB "" CACHE PATH "Location of libraries")

|

||||

SET(CUDNN_LIB "" CACHE PATH "Location of libraries")

|

||||

SET(TENSORRT_DIR "" CACHE PATH "Compile demo with TensorRT")

|

||||

|

||||

set(DEMO_NAME "ocr_system")

|

||||

|

||||

|

||||

macro(safe_set_static_flag)

|

||||

|

|

@ -15,24 +24,60 @@ macro(safe_set_static_flag)

|

|||

endforeach(flag_var)

|

||||

endmacro()

|

||||

|

||||

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -std=c++11 -g -fpermissive")

|

||||

set(CMAKE_STATIC_LIBRARY_PREFIX "")

|

||||

message("flags" ${CMAKE_CXX_FLAGS})

|

||||

set(CMAKE_CXX_FLAGS_RELEASE "-O3")

|

||||

if (WITH_MKL)

|

||||

ADD_DEFINITIONS(-DUSE_MKL)

|

||||

endif()

|

||||

|

||||

if(NOT DEFINED PADDLE_LIB)

|

||||

message(FATAL_ERROR "please set PADDLE_LIB with -DPADDLE_LIB=/path/paddle/lib")

|

||||

endif()

|

||||

if(NOT DEFINED DEMO_NAME)

|

||||

message(FATAL_ERROR "please set DEMO_NAME with -DDEMO_NAME=demo_name")

|

||||

|

||||

if(NOT DEFINED OPENCV_DIR)

|

||||

message(FATAL_ERROR "please set OPENCV_DIR with -DOPENCV_DIR=/path/opencv")

|

||||

endif()

|

||||

|

||||

|

||||

set(OPENCV_DIR ${OPENCV_DIR})

|

||||

find_package(OpenCV REQUIRED PATHS ${OPENCV_DIR}/share/OpenCV NO_DEFAULT_PATH)

|

||||

if (WIN32)

|

||||

include_directories("${PADDLE_LIB}/paddle/fluid/inference")

|

||||

include_directories("${PADDLE_LIB}/paddle/include")

|

||||

link_directories("${PADDLE_LIB}/paddle/fluid/inference")

|

||||

find_package(OpenCV REQUIRED PATHS ${OPENCV_DIR}/build/ NO_DEFAULT_PATH)

|

||||

|

||||

else ()

|

||||

find_package(OpenCV REQUIRED PATHS ${OPENCV_DIR}/share/OpenCV NO_DEFAULT_PATH)

|

||||

include_directories("${PADDLE_LIB}/paddle/include")

|

||||

link_directories("${PADDLE_LIB}/paddle/lib")

|

||||

endif ()

|

||||

include_directories(${OpenCV_INCLUDE_DIRS})

|

||||

|

||||

include_directories("${PADDLE_LIB}/paddle/include")

|

||||

if (WIN32)

|

||||

add_definitions("/DGOOGLE_GLOG_DLL_DECL=")

|

||||

set(CMAKE_C_FLAGS_DEBUG "${CMAKE_C_FLAGS_DEBUG} /bigobj /MTd")

|

||||

set(CMAKE_C_FLAGS_RELEASE "${CMAKE_C_FLAGS_RELEASE} /bigobj /MT")

|

||||

set(CMAKE_CXX_FLAGS_DEBUG "${CMAKE_CXX_FLAGS_DEBUG} /bigobj /MTd")

|

||||

set(CMAKE_CXX_FLAGS_RELEASE "${CMAKE_CXX_FLAGS_RELEASE} /bigobj /MT")

|

||||

if (WITH_STATIC_LIB)

|

||||

safe_set_static_flag()

|

||||

add_definitions(-DSTATIC_LIB)

|

||||

endif()

|

||||

else()

|

||||

set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -g -o3 -std=c++11")

|

||||

set(CMAKE_STATIC_LIBRARY_PREFIX "")

|

||||

endif()

|

||||

message("flags" ${CMAKE_CXX_FLAGS})

|

||||

|

||||

|

||||

if (WITH_GPU)

|

||||

if (NOT DEFINED CUDA_LIB OR ${CUDA_LIB} STREQUAL "")

|

||||

message(FATAL_ERROR "please set CUDA_LIB with -DCUDA_LIB=/path/cuda-8.0/lib64")

|

||||

endif()

|

||||

if (NOT WIN32)

|

||||

if (NOT DEFINED CUDNN_LIB)

|

||||

message(FATAL_ERROR "please set CUDNN_LIB with -DCUDNN_LIB=/path/cudnn_v7.4/cuda/lib64")

|

||||

endif()

|

||||

endif(NOT WIN32)

|

||||

endif()

|

||||

|

||||

include_directories("${PADDLE_LIB}/third_party/install/protobuf/include")

|

||||

include_directories("${PADDLE_LIB}/third_party/install/glog/include")

|

||||

include_directories("${PADDLE_LIB}/third_party/install/gflags/include")

|

||||

|

|

@ -43,10 +88,12 @@ include_directories("${PADDLE_LIB}/third_party/eigen3")

|

|||

|

||||

include_directories("${CMAKE_SOURCE_DIR}/")

|

||||

|

||||

if (USE_TENSORRT AND WITH_GPU)

|

||||

include_directories("${TENSORRT_ROOT}/include")

|

||||

link_directories("${TENSORRT_ROOT}/lib")

|

||||

endif()

|

||||

if (NOT WIN32)

|

||||

if (WITH_TENSORRT AND WITH_GPU)

|

||||

include_directories("${TENSORRT_DIR}/include")

|

||||

link_directories("${TENSORRT_DIR}/lib")

|

||||

endif()

|

||||

endif(NOT WIN32)

|

||||

|

||||

link_directories("${PADDLE_LIB}/third_party/install/zlib/lib")

|

||||

|

||||

|

|

@ -57,17 +104,24 @@ link_directories("${PADDLE_LIB}/third_party/install/xxhash/lib")

|

|||

link_directories("${PADDLE_LIB}/paddle/lib")

|

||||

|

||||

|

||||

AUX_SOURCE_DIRECTORY(./src SRCS)

|

||||

add_executable(${DEMO_NAME} ${SRCS})

|

||||

|

||||

if(WITH_MKL)

|

||||

include_directories("${PADDLE_LIB}/third_party/install/mklml/include")

|

||||

if (WIN32)

|

||||

set(MATH_LIB ${PADDLE_LIB}/third_party/install/mklml/lib/mklml.lib

|

||||

${PADDLE_LIB}/third_party/install/mklml/lib/libiomp5md.lib)

|

||||

else ()

|

||||

set(MATH_LIB ${PADDLE_LIB}/third_party/install/mklml/lib/libmklml_intel${CMAKE_SHARED_LIBRARY_SUFFIX}

|

||||

${PADDLE_LIB}/third_party/install/mklml/lib/libiomp5${CMAKE_SHARED_LIBRARY_SUFFIX})

|

||||

execute_process(COMMAND cp -r ${PADDLE_LIB}/third_party/install/mklml/lib/libmklml_intel${CMAKE_SHARED_LIBRARY_SUFFIX} /usr/lib)

|

||||

endif ()

|

||||

set(MKLDNN_PATH "${PADDLE_LIB}/third_party/install/mkldnn")

|

||||

if(EXISTS ${MKLDNN_PATH})

|

||||

include_directories("${MKLDNN_PATH}/include")

|

||||

if (WIN32)

|

||||

set(MKLDNN_LIB ${MKLDNN_PATH}/lib/mkldnn.lib)

|

||||

else ()

|

||||

set(MKLDNN_LIB ${MKLDNN_PATH}/lib/libmkldnn.so.0)

|

||||

endif ()

|

||||

endif()

|

||||

else()

|

||||

set(MATH_LIB ${PADDLE_LIB}/third_party/install/openblas/lib/libopenblas${CMAKE_STATIC_LIBRARY_SUFFIX})

|

||||

|

|

@ -82,24 +136,66 @@ else()

|

|||

${PADDLE_LIB}/paddle/lib/libpaddle_fluid${CMAKE_SHARED_LIBRARY_SUFFIX})

|

||||

endif()

|

||||

|

||||

set(EXTERNAL_LIB "-lrt -ldl -lpthread -lm")

|

||||

|

||||

set(DEPS ${DEPS}

|

||||

if (NOT WIN32)

|

||||

set(DEPS ${DEPS}

|

||||

${MATH_LIB} ${MKLDNN_LIB}

|

||||

glog gflags protobuf z xxhash

|

||||

${EXTERNAL_LIB} ${OpenCV_LIBS})

|

||||

)

|

||||

if(EXISTS "${PADDLE_LIB}/third_party/install/snappystream/lib")

|

||||

set(DEPS ${DEPS} snappystream)

|

||||

endif()

|

||||

if (EXISTS "${PADDLE_LIB}/third_party/install/snappy/lib")

|

||||

set(DEPS ${DEPS} snappy)

|

||||

endif()

|

||||

else()

|

||||

set(DEPS ${DEPS}

|

||||

${MATH_LIB} ${MKLDNN_LIB}

|

||||

glog gflags_static libprotobuf xxhash)

|

||||

set(DEPS ${DEPS} libcmt shlwapi)

|

||||

if (EXISTS "${PADDLE_LIB}/third_party/install/snappy/lib")

|

||||

set(DEPS ${DEPS} snappy)

|

||||

endif()

|

||||

if(EXISTS "${PADDLE_LIB}/third_party/install/snappystream/lib")

|

||||

set(DEPS ${DEPS} snappystream)

|

||||

endif()

|

||||

endif(NOT WIN32)

|

||||

|

||||

|

||||

if(WITH_GPU)

|

||||

if (USE_TENSORRT)

|

||||

set(DEPS ${DEPS}

|

||||

${TENSORRT_ROOT}/lib/libnvinfer${CMAKE_SHARED_LIBRARY_SUFFIX})

|

||||

set(DEPS ${DEPS}

|

||||

${TENSORRT_ROOT}/lib/libnvinfer_plugin${CMAKE_SHARED_LIBRARY_SUFFIX})

|

||||

if(NOT WIN32)

|

||||

if (WITH_TENSORRT)

|

||||

set(DEPS ${DEPS} ${TENSORRT_DIR}/lib/libnvinfer${CMAKE_SHARED_LIBRARY_SUFFIX})

|

||||

set(DEPS ${DEPS} ${TENSORRT_DIR}/lib/libnvinfer_plugin${CMAKE_SHARED_LIBRARY_SUFFIX})

|

||||

endif()

|

||||

set(DEPS ${DEPS} ${CUDA_LIB}/libcudart${CMAKE_SHARED_LIBRARY_SUFFIX})

|

||||

set(DEPS ${DEPS} ${CUDA_LIB}/libcudart${CMAKE_SHARED_LIBRARY_SUFFIX} )

|

||||

set(DEPS ${DEPS} ${CUDA_LIB}/libcublas${CMAKE_SHARED_LIBRARY_SUFFIX} )

|

||||

set(DEPS ${DEPS} ${CUDNN_LIB}/libcudnn${CMAKE_SHARED_LIBRARY_SUFFIX} )

|

||||

set(DEPS ${DEPS} ${CUDNN_LIB}/libcudnn${CMAKE_SHARED_LIBRARY_SUFFIX})

|

||||

else()

|

||||

set(DEPS ${DEPS} ${CUDA_LIB}/cudart${CMAKE_STATIC_LIBRARY_SUFFIX} )

|

||||

set(DEPS ${DEPS} ${CUDA_LIB}/cublas${CMAKE_STATIC_LIBRARY_SUFFIX} )

|

||||

set(DEPS ${DEPS} ${CUDNN_LIB}/cudnn${CMAKE_STATIC_LIBRARY_SUFFIX})

|

||||

endif()

|

||||

endif()

|

||||

|

||||

|

||||

if (NOT WIN32)

|

||||

set(EXTERNAL_LIB "-ldl -lrt -lgomp -lz -lm -lpthread")

|

||||

set(DEPS ${DEPS} ${EXTERNAL_LIB})

|

||||

endif()

|

||||

|

||||

set(DEPS ${DEPS} ${OpenCV_LIBS})

|

||||

|

||||

AUX_SOURCE_DIRECTORY(./src SRCS)

|

||||

add_executable(${DEMO_NAME} ${SRCS})

|

||||

|

||||

target_link_libraries(${DEMO_NAME} ${DEPS})

|

||||

|

||||

if (WIN32 AND WITH_MKL)

|

||||

add_custom_command(TARGET ${DEMO_NAME} POST_BUILD

|

||||

COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mklml/lib/mklml.dll ./mklml.dll

|

||||

COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mklml/lib/libiomp5md.dll ./libiomp5md.dll

|

||||

COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mkldnn/lib/mkldnn.dll ./mkldnn.dll

|

||||

COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mklml/lib/mklml.dll ./release/mklml.dll

|

||||

COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mklml/lib/libiomp5md.dll ./release/libiomp5md.dll

|

||||

COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mkldnn/lib/mkldnn.dll ./release/mkldnn.dll

|

||||

)

|

||||

endif()

|

||||

|

|

@ -0,0 +1,95 @@

|

|||

# Visual Studio 2019 Community CMake 编译指南

|

||||

|

||||

PaddleOCR在Windows 平台下基于`Visual Studio 2019 Community` 进行了测试。微软从`Visual Studio 2017`开始即支持直接管理`CMake`跨平台编译项目,但是直到`2019`才提供了稳定和完全的支持,所以如果你想使用CMake管理项目编译构建,我们推荐你使用`Visual Studio 2019`环境下构建。

|

||||

|

||||

|

||||

## 前置条件

|

||||

* Visual Studio 2019

|

||||

* CUDA 9.0 / CUDA 10.0,cudnn 7+ (仅在使用GPU版本的预测库时需要)

|

||||

* CMake 3.0+

|

||||

|

||||

请确保系统已经安装好上述基本软件,我们使用的是`VS2019`的社区版。

|

||||

|

||||

**下面所有示例以工作目录为 `D:\projects`演示**。

|

||||

|

||||

### Step1: 下载PaddlePaddle C++ 预测库 fluid_inference

|

||||

|

||||

PaddlePaddle C++ 预测库针对不同的`CPU`和`CUDA`版本提供了不同的预编译版本,请根据实际情况下载: [C++预测库下载列表](https://www.paddlepaddle.org.cn/documentation/docs/zh/develop/advanced_guide/inference_deployment/inference/windows_cpp_inference.html)

|

||||

|

||||

解压后`D:\projects\fluid_inference`目录包含内容为:

|

||||

```

|

||||

fluid_inference

|

||||

├── paddle # paddle核心库和头文件

|

||||

|

|

||||

├── third_party # 第三方依赖库和头文件

|

||||

|

|

||||

└── version.txt # 版本和编译信息

|

||||

```

|

||||

|

||||

### Step2: 安装配置OpenCV

|

||||

|

||||

1. 在OpenCV官网下载适用于Windows平台的3.4.6版本, [下载地址](https://sourceforge.net/projects/opencvlibrary/files/3.4.6/opencv-3.4.6-vc14_vc15.exe/download)

|

||||

2. 运行下载的可执行文件,将OpenCV解压至指定目录,如`D:\projects\opencv`

|

||||

3. 配置环境变量,如下流程所示

|

||||

- 我的电脑->属性->高级系统设置->环境变量

|

||||

- 在系统变量中找到Path(如没有,自行创建),并双击编辑

|

||||

- 新建,将opencv路径填入并保存,如`D:\projects\opencv\build\x64\vc14\bin`

|

||||

|

||||

### Step3: 使用Visual Studio 2019直接编译CMake

|

||||

|

||||

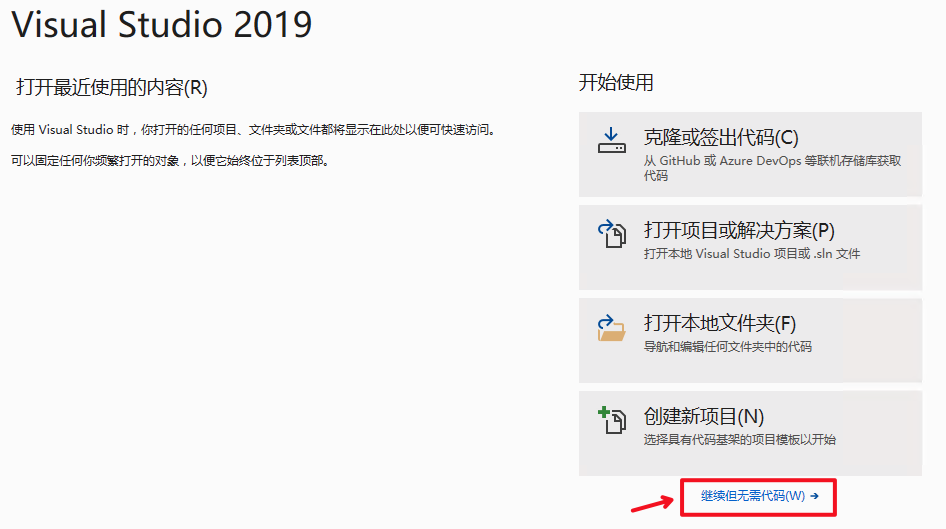

1. 打开Visual Studio 2019 Community,点击`继续但无需代码`

|

||||

|

||||

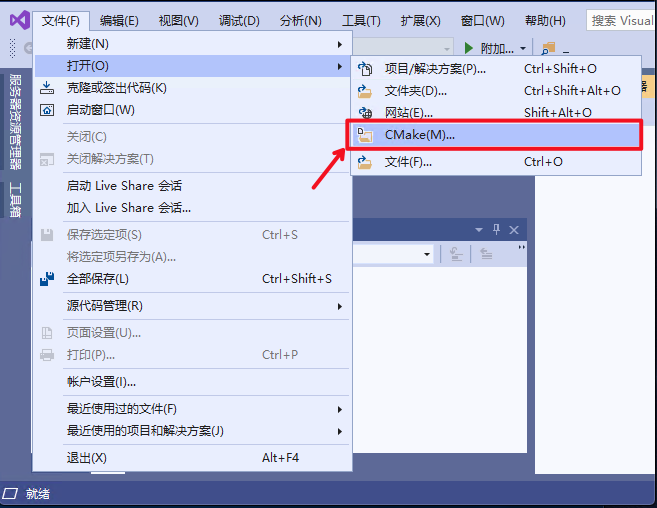

2. 点击: `文件`->`打开`->`CMake`

|

||||

|

||||

|

||||

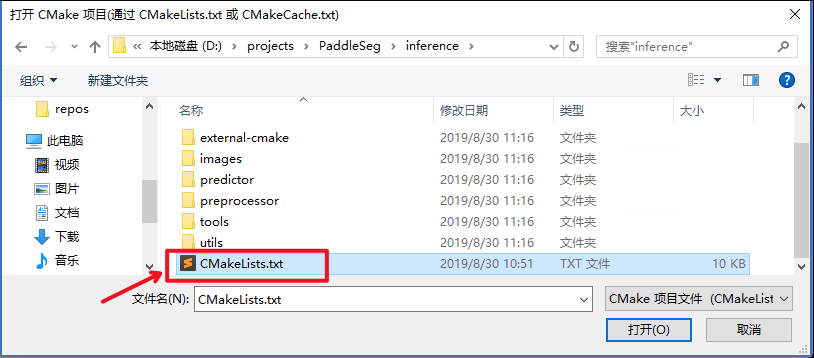

选择项目代码所在路径,并打开`CMakeList.txt`:

|

||||

|

||||

|

||||

|

||||

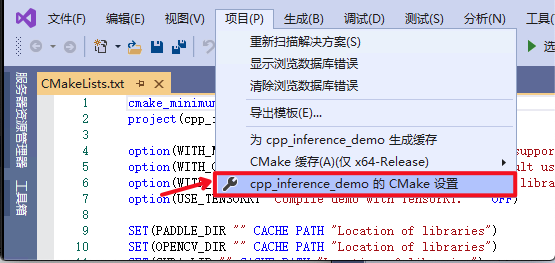

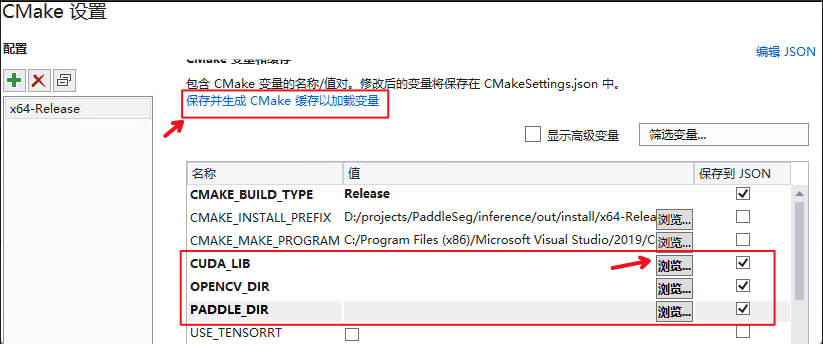

3. 点击:`项目`->`cpp_inference_demo的CMake设置`

|

||||

|

||||

|

||||

|

||||

4. 点击`浏览`,分别设置编译选项指定`CUDA`、`CUDNN_LIB`、`OpenCV`、`Paddle预测库`的路径

|

||||

|

||||

三个编译参数的含义说明如下(带`*`表示仅在使用**GPU版本**预测库时指定, 其中CUDA库版本尽量对齐,**使用9.0、10.0版本,不使用9.2、10.1等版本CUDA库**):

|

||||

|

||||

| 参数名 | 含义 |

|

||||

| ---- | ---- |

|

||||

| *CUDA_LIB | CUDA的库路径 |

|

||||

| *CUDNN_LIB | CUDNN的库路径 |

|

||||

| OPENCV_DIR | OpenCV的安装路径 |

|

||||

| PADDLE_LIB | Paddle预测库的路径 |

|

||||

|

||||

**注意:**

|

||||

1. 使用`CPU`版预测库,请把`WITH_GPU`的勾去掉

|

||||

2. 如果使用的是`openblas`版本,请把`WITH_MKL`勾去掉

|

||||

|

||||

|

||||

|

||||

**设置完成后**, 点击上图中`保存并生成CMake缓存以加载变量`。

|

||||

|

||||

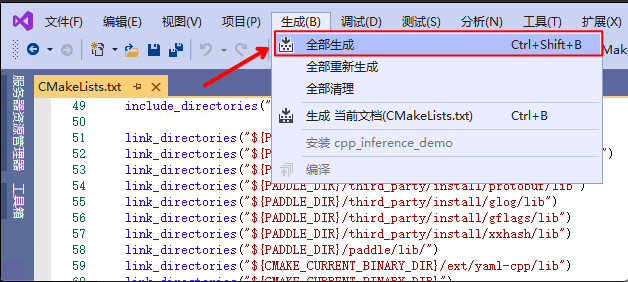

5. 点击`生成`->`全部生成`

|

||||

|

||||

|

||||

|

||||

|

||||

### Step4: 预测及可视化

|

||||

|

||||

上述`Visual Studio 2019`编译产出的可执行文件在`out\build\x64-Release`目录下,打开`cmd`,并切换到该目录:

|

||||

|

||||

```

|

||||

cd D:\projects\PaddleOCR\deploy\cpp_infer\out\build\x64-Release

|

||||

```

|

||||

可执行文件`ocr_system.exe`即为样例的预测程序,其主要使用方法如下

|

||||

|

||||

```shell

|

||||

#预测图片 `D:\projects\PaddleOCR\doc\imgs\10.jpg`

|

||||

.\ocr_system.exe D:\projects\PaddleOCR\deploy\cpp_infer\tools\config.txt D:\projects\PaddleOCR\doc\imgs\10.jpg

|

||||

```

|

||||

|

||||

第一个参数为配置文件路径,第二个参数为需要预测的图片路径。

|

||||

|

||||

|

||||

### 注意

|

||||

* 在Windows下的终端中执行文件exe时,可能会发生乱码的现象,此时需要在终端中输入`CHCP 65001`,将终端的编码方式由GBK编码(默认)改为UTF-8编码,更加具体的解释可以参考这篇博客:[https://blog.csdn.net/qq_35038153/article/details/78430359](https://blog.csdn.net/qq_35038153/article/details/78430359)。

|

||||

|

|

@ -7,6 +7,9 @@

|

|||

|

||||

### 运行准备

|

||||

- Linux环境,推荐使用docker。

|

||||

- Windows环境,目前支持基于`Visual Studio 2019 Community`进行编译。

|

||||

|

||||

* 该文档主要介绍基于Linux环境的PaddleOCR C++预测流程,如果需要在Windows下基于预测库进行C++预测,具体编译方法请参考[Windows下编译教程](./docs/windows_vs2019_build.md)

|

||||

|

||||

### 1.1 编译opencv库

|

||||

|

||||

|

|

|

|||

|

|

@ -44,7 +44,7 @@ Config::LoadConfig(const std::string &config_path) {

|

|||

std::map<std::string, std::string> dict;

|

||||

for (int i = 0; i < config.size(); i++) {

|

||||

// pass for empty line or comment

|

||||

if (config[i].size() <= 1 or config[i][0] == '#') {

|

||||

if (config[i].size() <= 1 || config[i][0] == '#') {

|

||||

continue;

|

||||

}

|

||||

std::vector<std::string> res = split(config[i], " ");

|

||||

|

|

|

|||

|

|

@ -39,22 +39,21 @@ std::vector<std::string> Utility::ReadDict(const std::string &path) {

|

|||

void Utility::VisualizeBboxes(

|

||||

const cv::Mat &srcimg,

|

||||

const std::vector<std::vector<std::vector<int>>> &boxes) {

|

||||

cv::Point rook_points[boxes.size()][4];

|

||||

for (int n = 0; n < boxes.size(); n++) {

|

||||

for (int m = 0; m < boxes[0].size(); m++) {

|

||||

rook_points[n][m] = cv::Point(int(boxes[n][m][0]), int(boxes[n][m][1]));

|

||||

}

|

||||

}

|

||||

cv::Mat img_vis;

|

||||

srcimg.copyTo(img_vis);

|

||||

for (int n = 0; n < boxes.size(); n++) {

|

||||

const cv::Point *ppt[1] = {rook_points[n]};

|

||||

cv::Point rook_points[4];

|

||||

for (int m = 0; m < boxes[n].size(); m++) {

|

||||

rook_points[m] = cv::Point(int(boxes[n][m][0]), int(boxes[n][m][1]));

|

||||

}

|

||||

|

||||

const cv::Point *ppt[1] = {rook_points};

|

||||

int npt[] = {4};

|

||||

cv::polylines(img_vis, ppt, npt, 1, 1, CV_RGB(0, 255, 0), 2, 8, 0);

|

||||

}

|

||||

|

||||

cv::imwrite("./ocr_vis.png", img_vis);

|

||||

std::cout << "The detection visualized image saved in ./ocr_vis.png.pn"

|

||||

std::cout << "The detection visualized image saved in ./ocr_vis.png"

|

||||

<< std::endl;

|

||||

}

|

||||

|

||||

|

|

|

|||

|

|

@ -1,8 +1,7 @@

|

|||

|

||||

OPENCV_DIR=your_opencv_dir

|

||||

LIB_DIR=your_paddle_inference_dir

|

||||

CUDA_LIB_DIR=your_cuda_lib_dir

|

||||

CUDNN_LIB_DIR=/your_cudnn_lib_dir

|

||||

CUDNN_LIB_DIR=your_cudnn_lib_dir

|

||||

|

||||

BUILD_DIR=build

|

||||

rm -rf ${BUILD_DIR}

|

||||

|

|

@ -11,7 +10,6 @@ cd ${BUILD_DIR}

|

|||

cmake .. \

|

||||

-DPADDLE_LIB=${LIB_DIR} \

|

||||

-DWITH_MKL=ON \

|

||||

-DDEMO_NAME=ocr_system \

|

||||

-DWITH_GPU=OFF \

|

||||

-DWITH_STATIC_LIB=OFF \

|

||||

-DUSE_TENSORRT=OFF \

|

||||

|

|

|

|||

|

|

@ -15,8 +15,7 @@ det_model_dir ./inference/det_db

|

|||

# rec config

|

||||

rec_model_dir ./inference/rec_crnn

|

||||

char_list_file ../../ppocr/utils/ppocr_keys_v1.txt

|

||||

img_path ../../doc/imgs/11.jpg

|

||||

|

||||

# show the detection results

|

||||

visualize 0

|

||||

visualize 1

|

||||

|

||||

|

|

|

|||

|

|

@ -18,7 +18,7 @@ Paddle Lite是飞桨轻量化推理引擎,为手机、IOT端提供高效推理

|

|||

1. [Docker](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#docker)

|

||||

2. [Linux](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#android)

|

||||

3. [MAC OS](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#id13)

|

||||

4. [Windows](https://paddle-lite.readthedocs.io/zh/latest/demo_guides/x86.html#windows)

|

||||

4. [Windows](https://paddle-lite.readthedocs.io/zh/latest/demo_guides/x86.html#id4)

|

||||

|

||||

### 1.2 准备预测库

|

||||

|

||||

|

|

@ -84,7 +84,7 @@ Paddle-Lite 提供了多种策略来自动优化原始的模型,其中包括

|

|||

|

||||

|模型简介|检测模型|识别模型|Paddle-Lite版本|

|

||||

|-|-|-|-|

|

||||

|超轻量级中文OCR opt优化模型|[下载地址](https://paddleocr.bj.bcebos.com/deploy/lite/ch_det_mv3_db_opt.nb)|[下载地址](https://paddleocr.bj.bcebos.com/deploy/lite/ch_rec_mv3_crnn_opt.nb)|2.6.1|

|

||||

|超轻量级中文OCR opt优化模型|[下载地址](https://paddleocr.bj.bcebos.com/deploy/lite/ch_det_mv3_db_opt.nb)|[下载地址](https://paddleocr.bj.bcebos.com/deploy/lite/ch_rec_mv3_crnn_opt.nb)|develop|

|

||||

|

||||

如果直接使用上述表格中的模型进行部署,可略过下述步骤,直接阅读 [2.2节](#2.2与手机联调)。

|

||||

|

||||

|

|

|

|||

|

|

@ -3,7 +3,7 @@

|

|||

|

||||

This tutorial will introduce how to use paddle-lite to deploy paddleOCR ultra-lightweight Chinese and English detection models on mobile phones.

|

||||

|

||||

addle Lite is a lightweight inference engine for PaddlePaddle.

|

||||

paddle-lite is a lightweight inference engine for PaddlePaddle.

|

||||

It provides efficient inference capabilities for mobile phones and IOTs,

|

||||

and extensively integrates cross-platform hardware to provide lightweight

|

||||

deployment solutions for end-side deployment issues.

|

||||

|

|

@ -17,7 +17,7 @@ deployment solutions for end-side deployment issues.

|

|||

[build for Docker](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#docker)

|

||||

[build for Linux](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#android)

|

||||

[build for MAC OS](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#id13)

|

||||

[build for windows](https://paddle-lite.readthedocs.io/zh/latest/demo_guides/x86.html#windows)

|

||||

[build for windows](https://paddle-lite.readthedocs.io/zh/latest/demo_guides/x86.html#id4)

|

||||

|

||||

## 3. Download prebuild library for android and ios

|

||||

|

||||

|

|

@ -155,7 +155,7 @@ demo/cxx/ocr/

|

|||

|-- debug/

|

||||

| |--ch_det_mv3_db_opt.nb Detection model

|

||||

| |--ch_rec_mv3_crnn_opt.nb Recognition model

|

||||

| |--11.jpg image for OCR

|

||||

| |--11.jpg Image for OCR

|

||||

| |--ppocr_keys_v1.txt Dictionary file

|

||||

| |--libpaddle_light_api_shared.so C++ .so file

|

||||

| |--config.txt Config file

|

||||

|

|

|

|||

|

|

@ -0,0 +1,77 @@

|

|||

# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

|

||||

from paddle_serving_client import Client

|

||||

import cv2

|

||||

import sys

|

||||

import numpy as np

|

||||

import os

|

||||

from paddle_serving_client import Client

|

||||

from paddle_serving_app.reader import Sequential, ResizeByFactor

|

||||

from paddle_serving_app.reader import Div, Normalize, Transpose

|

||||

from paddle_serving_app.reader import DBPostProcess, FilterBoxes

|

||||

if sys.argv[1] == 'gpu':

|

||||

from paddle_serving_server_gpu.web_service import WebService

|

||||

elif sys.argv[1] == 'cpu'

|

||||

from paddle_serving_server.web_service import WebService

|

||||

import time

|

||||

import re

|

||||

import base64

|

||||

|

||||

|

||||

class OCRService(WebService):

|

||||

def init_det(self):

|

||||

self.det_preprocess = Sequential([

|

||||

ResizeByFactor(32, 960), Div(255),

|

||||

Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]), Transpose(

|

||||

(2, 0, 1))

|

||||

])

|

||||

self.filter_func = FilterBoxes(10, 10)

|

||||

self.post_func = DBPostProcess({

|

||||

"thresh": 0.3,

|

||||

"box_thresh": 0.5,

|

||||

"max_candidates": 1000,

|

||||

"unclip_ratio": 1.5,

|

||||

"min_size": 3

|

||||

})

|

||||

|

||||

def preprocess(self, feed=[], fetch=[]):

|

||||

data = base64.b64decode(feed[0]["image"].encode('utf8'))

|

||||

data = np.fromstring(data, np.uint8)

|

||||

im = cv2.imdecode(data, cv2.IMREAD_COLOR)

|

||||

self.ori_h, self.ori_w, _ = im.shape

|

||||

det_img = self.det_preprocess(im)

|

||||

_, self.new_h, self.new_w = det_img.shape

|

||||

return {"image": det_img[np.newaxis, :].copy()}, ["concat_1.tmp_0"]

|

||||

|

||||

def postprocess(self, feed={}, fetch=[], fetch_map=None):

|

||||

det_out = fetch_map["concat_1.tmp_0"]

|

||||

ratio_list = [

|

||||

float(self.new_h) / self.ori_h, float(self.new_w) / self.ori_w

|

||||

]

|

||||

dt_boxes_list = self.post_func(det_out, [ratio_list])

|

||||

dt_boxes = self.filter_func(dt_boxes_list[0], [self.ori_h, self.ori_w])

|

||||

return {"dt_boxes": dt_boxes.tolist()}

|

||||

|

||||

|

||||

ocr_service = OCRService(name="ocr")

|

||||

ocr_service.load_model_config("ocr_det_model")

|

||||

if sys.argv[1] == 'gpu':

|

||||

ocr_service.set_gpus("0")

|

||||

ocr_service.prepare_server(workdir="workdir", port=9292, device="gpu", gpuid=0)

|

||||

elif sys.argv[1] == 'cpu':

|

||||

ocr_service.prepare_server(workdir="workdir", port=9292)

|

||||

ocr_service.init_det()

|

||||

ocr_service.run_debugger_service()

|

||||

ocr_service.run_web_service()

|

||||

|

|

@ -0,0 +1,78 @@

|

|||

# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

|

||||

#

|

||||

# Licensed under the Apache License, Version 2.0 (the "License");

|

||||

# you may not use this file except in compliance with the License.

|

||||

# You may obtain a copy of the License at

|

||||

#

|

||||

# http://www.apache.org/licenses/LICENSE-2.0

|

||||

#

|

||||

# Unless required by applicable law or agreed to in writing, software

|

||||

# distributed under the License is distributed on an "AS IS" BASIS,

|

||||

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

# See the License for the specific language governing permissions and

|

||||

# limitations under the License.

|

||||

|

||||

from paddle_serving_client import Client

|

||||

import cv2

|

||||

import sys

|

||||

import numpy as np

|

||||

import os

|

||||

from paddle_serving_client import Client

|

||||

from paddle_serving_app.reader import Sequential, ResizeByFactor

|

||||

from paddle_serving_app.reader import Div, Normalize, Transpose

|

||||

from paddle_serving_app.reader import DBPostProcess, FilterBoxes

|

||||

if sys.argv[1] == 'gpu':

|

||||

from paddle_serving_server_gpu.web_service import WebService

|

||||

elif sys.argv[1] == 'cpu':

|

||||

from paddle_serving_server.web_service import WebService

|

||||

import time